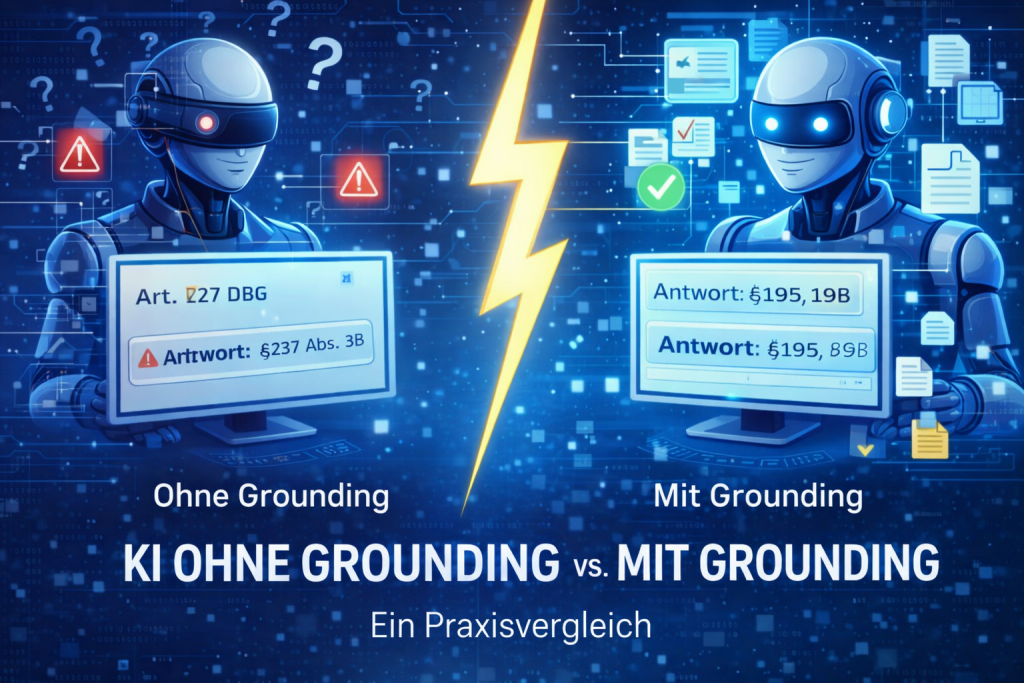

The theory is clear: AI makes mistakes in legal contexts. But how much does grounding change the result in practice?

The answer: the difference is not marginal, it is structural. The following examples show how AI behaves without grounding versus with grounding.

The basis for this difference lies in the concept of grounding.

What do “without” and “with” grounding mean?

Definition:

Grounding means that an AI system does not rely solely on its training data, but actively incorporates verified external sources into its responses.

The distinction is therefore clear:

AI without grounding generates content based on probabilities.

AI with grounding provides answers based on concrete, verified sources.
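To make the distinction concrete, here is a minimal sketch of what changes on the input side. It assumes a hypothetical `Source` structure and `build_prompt` helper (neither comes from a specific product or library): without sources the model sees only the question, with sources it is instructed to answer from cited passages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    """A verified passage with its citation (hypothetical structure)."""
    citation: str
    text: str


def build_prompt(question: str, sources: list[Source]) -> str:
    """Return the prompt that would be sent to the model.

    Without sources, the model only sees the question (no grounding).
    With sources, it is told to answer from the cited passages only.
    """
    if not sources:
        return question
    context = "\n".join(f"[{s.citation}] {s.text}" for s in sources)
    return (
        "Answer using only the sources below and cite the provision you rely on.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


question = "How long does a company have to answer a data subject access request?"

# Summary of the provision as used in Example 1 of this article.
art_12_3 = Source(
    citation="Art. 12(3) GDPR",
    text="Response generally within one month; extension by two further months "
         "where necessary, considering complexity and number of requests.",
)

ungrounded = build_prompt(question, [])          # question only
grounded = build_prompt(question, [art_12_3])    # question plus cited source
print(grounded)
```

The model is the same in both cases; only the material it is asked to work from differs.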

Example 1: GDPR Data Subject Access Request

Without grounding

“Companies must respond to access requests within 30 days. In certain cases, this period can be extended.”

Problem:

- no specific legal provision

- no contextual classification

- no justification

With grounding

“Pursuant to Art. 12(3) GDPR, a company must generally respond to a data subject access request within one month.

This period may be extended by a further two months where necessary, taking into account the complexity and number of requests.”

Advantage:

- clear legal basis

- correct deadline

- transparent reasoning

Result:

Only with grounding are legal statements verifiable and substantiated.

Why AI produces incorrect answers in the first place is explained here in detail.

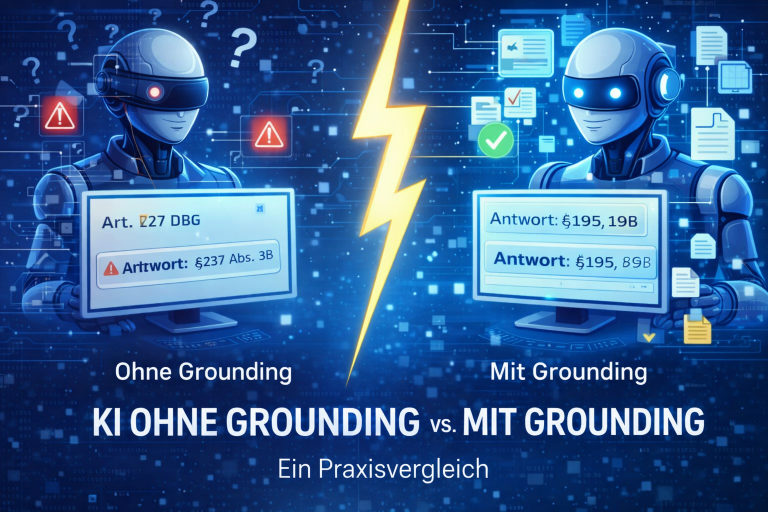

Example 2: Limitation Periods for Data Protection Violations

Without grounding

“The limitation period for data protection violations is generally three years.”

Problem:

- overly general statement

- no differentiation

- no classification by type of claim

With grounding

“Claims for damages arising from data protection violations are generally subject to the limitation period under Sections 195 and 199 of the German Civil Code (BGB), which is three years.

The period begins at the end of the year in which the claim arose and the data subject became aware, or should have become aware without gross negligence, of the relevant circumstances.”

Advantage:

- specific legal provisions

- correct classification

- legally sound

Result:

Grounding enables accurate legal classification instead of broad, generalized statements.

Example 3: Liability Clause in Contracts

Without grounding

“A limitation of liability is generally permissible as long as it does not violate applicable law.”

Problem:

- too general

- no differentiation

- not usable for concrete assessment

With grounding

“A limitation of liability is generally permissible, but is subject to the constraints of Sections 307 et seq. of the German Civil Code (BGB).

In particular, clauses are invalid if they restrict essential contractual obligations or exclude liability for intent and gross negligence.”

Advantage:

- clear legal boundaries

- specific legal provisions

- directly applicable

Result:

With grounding, statements become practically actionable.

What the comparison shows

| Without grounding | With grounding |
| --- | --- |
| plausible statements | substantiated statements |
| no sources | clear legal basis |
| high verification effort | targeted verification |
| risk | reliability |

The difference is structural: it is not about better phrasing, but about a fundamentally different way of generating answers.

Why this difference arises

The issue does not lie in the AI itself, but in its data foundation.

AI is based on:

- training data (past information)

- probabilities (no fact-checking)

What is missing:

- access to up-to-date, verified legal sources

- structured legal data

- traceable sources
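Traceability, the last point above, can be checked mechanically once sources are available. The sketch below is an illustration under simplifying assumptions: the regular expression only recognizes citations of the form "Art. 12(3) GDPR", and the function names are hypothetical. Real legal citations are far more varied.

```python
import re

# Hypothetical pattern: matches citations such as "Art. 12(3) GDPR" only.
CITATION_PATTERN = re.compile(r"Art\.\s*\d+(?:\(\d+\))?\s+GDPR")


def untraceable_citations(answer: str, retrieved: set[str]) -> list[str]:
    """Return citations in the answer that match no retrieved source."""
    found = CITATION_PATTERN.findall(answer)
    return [c for c in found if c not in retrieved]


def is_traceable(answer: str, retrieved: set[str]) -> bool:
    """True if the answer cites at least one provision and all are retrieved."""
    found = CITATION_PATTERN.findall(answer)
    return bool(found) and all(c in retrieved for c in found)


# Citations of the sources that were actually supplied to the model.
retrieved = {"Art. 12(3) GDPR"}

grounded_answer = "Pursuant to Art. 12(3) GDPR, a response is due within one month."
plain_answer = "Companies must respond to access requests within 30 days."
# Cites a provision that was not among the sources supplied in this run.
stray_answer = "Under Art. 15(1) GDPR, the request must be answered."

print(is_traceable(grounded_answer, retrieved))
print(is_traceable(plain_answer, retrieved))
print(untraceable_citations(stray_answer, retrieved))
```

An answer without any citation fails the check just like one that cites a source the system never supplied, which is what turns open-ended review into targeted verification.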

How to implement grounding in practice

Reliable legal AI requires three components:

- Structured legal data foundation (e.g. laws, case law, regulations)

- Semantic search (to correctly interpret and contextualize content)

- Integration with the AI system (e.g. via MCP or APIs)

Only then do responses gain real substance.
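The three components can be sketched in a few lines. This is a toy illustration, not a production design: the corpus holds only the provisions cited in the examples above, all names (`Provision`, `search`, `grounded_context`) are hypothetical, and plain word overlap stands in for semantic search, which in a real system would typically use embeddings or a dedicated search engine.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Provision:
    citation: str
    summary: str


# 1. Structured legal data: a toy corpus; summaries paraphrase this article.
CORPUS = [
    Provision("Art. 12(3) GDPR",
              "response to a data subject access request within one month, "
              "extension by two further months"),
    Provision("Sections 195, 199 BGB",
              "limitation period of three years for damages claims, "
              "starting at the end of the year"),
    Provision("Sections 307 et seq. BGB",
              "limits on limitation of liability clauses in contracts"),
]


def tokens(text: str) -> set[str]:
    """Lowercase word set used for the overlap score."""
    return set(re.findall(r"[a-z]+", text.lower()))


# 2. Search: word overlap as a simple stand-in for semantic search.
def search(query: str, top_k: int = 1) -> list[Provision]:
    q = tokens(query)
    ranked = sorted(CORPUS, key=lambda p: len(q & tokens(p.summary)), reverse=True)
    return ranked[:top_k]


# 3. Integration: hand the retrieved provisions to the AI system as context.
def grounded_context(query: str) -> str:
    return "\n".join(f"[{p.citation}] {p.summary}" for p in search(query))


print(grounded_context("What is the limitation period for damages claims?"))
```

Each step can be replaced independently, for example by swapping the overlap score for a vector search, without changing how the result is passed to the model.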

How companies implement grounding in practice

In practice, the main challenge lies in technical implementation.

What is required:

- access to structured legal sources

- semantic processing of this data

- an interface connecting it to the AI system
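For the interface, MCP (Model Context Protocol) is one option. It is built on JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request. The sketch below only builds such a message; the tool name `search_legal_sources` and its arguments are hypothetical and not taken from any particular server.

```python
import json


def mcp_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument names, for illustration only.
request = mcp_tool_call(
    1,
    "search_legal_sources",
    {"query": "limitation period data protection damages", "jurisdiction": "DE"},
)
print(request)
```

In practice an MCP client library handles the transport and message framing; the point here is that the AI system asks a separate service for sources instead of answering from training data alone.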

Example: PLANIT // LAWBSTER

PLANIT // LAWBSTER is a purpose-built MCP server that connects AI systems such as ChatGPT, Claude, or Microsoft Copilot with legal data, enabling grounding at a technical level.

This means:

- access to verified legal sources

- structured and semantic search

- traceable, source-based answers

Your existing AI is not replaced, but made reliable and controllable.

What this means for companies

Companies face a clear decision:

- AI without grounding → fast, but error-prone

- AI with grounding → fast and reliable

The difference lies not in the tool, but in the architecture.

In short

- AI without grounding is fast, but unreliable

- AI with grounding delivers verifiable, substantiated answers

- the key factor is the data foundation and how it is connected

Only with grounding does AI become truly usable in legal contexts.

How this integration works technically is explained here.

Would you like to see the difference for yourself?

Test PLANIT // LAWBSTER free for 14 days.

Frequently Asked Questions (FAQ)

What is the difference between AI with and without grounding?

AI without grounding generates answers based on probabilities, whereas AI with grounding accesses verified sources and therefore enables reliable, substantiated responses.

Why does ChatGPT make mistakes in legal contexts?

Because it does not check its statements against real sources; it generates text based on probabilities.

Is a good prompt sufficient?

No. Without a reliable data foundation, AI remains error-prone.

How can AI become reliable in legal contexts?

By connecting it to structured, verified legal data.