Many believe that if AI responses aren’t good, the problem lies in the prompt.

So they optimize:

- more precise instructions

- longer prompts

- more context

The assumption:

With the right prompt, AI becomes reliable.

Prompts don’t fix factual errors — they just phrase them more convincingly.

The real solution isn’t the prompt, but the connection to reliable data.

The biggest misconception

The idea that better prompts lead to better answers is widespread.

But factual errors cannot be fixed by improving prompts.

Why?

Because prompts only influence how an answer is formulated—

not whether it is correct.

The core point

Prompts control phrasing, not reliability.

Or put differently:

A better prompt makes an answer clearer, more structured, and often more convincing.

But it does not automatically make it correct.

Why prompts are not enough

Language models like ChatGPT, Claude, or Microsoft Copilot follow a simple principle:

- They calculate probabilities

- They generate text based on patterns

What they do not do on their own:

- Verify facts

- Check sources

- Perform legal evaluation

The consequence:

Even a perfect prompt can only work with what the AI “knows.”
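The principle above can be illustrated with a toy sketch. It is not how any specific product is implemented: the scores are invented for illustration and the function names are hypothetical. It only shows that picking a continuation by probability involves no step where a fact is checked.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_next(scores, rng):
    """Pick a continuation in proportion to its probability."""
    probs = softmax(scores)
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Invented scores for continuing "The deadline is ...".
# They stand for how familiar a phrase is, not for what the law says.
toy_scores = {"30 days": 2.0, "one month": 1.6, "14 days": 0.3}

probs = softmax(toy_scores)
most_likely = max(probs, key=probs.get)
print("most likely continuation:", most_likely)
print("sampled continuation:", sample_next(toy_scores, random.Random(0)))
```

Nothing in this loop consults a source. A different prompt would shift the scores, but the selection mechanism stays the same.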

The real problem

The issue is not the prompt—

but the lack of a reliable data foundation.

Even very good prompts can produce incorrect answers, because the AI has no way to verify its own outputs.

Concrete example

Using only a prompt

“State the correct deadline for a GDPR data access request and verify your answer.”

AI response:

“The deadline is 30 days and can be extended.”

The problem:

- no legal basis

- no context (when and by how much the deadline can be extended)

- no certainty

With data grounding

“According to Art. 12(3) GDPR, the deadline is one month from receipt of the request.

It can be extended by two further months where necessary, taking into account the complexity and number of requests.”

The difference:

- concrete source

- correct context (length of and condition for the extension)

- verifiable

The key insight:

The difference is not created by the prompt—

but by access to data.
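A minimal sketch of the two approaches in the example, assuming the source passage has already been retrieved. The function names and the passage wording are illustrative assumptions; the passage is a paraphrase, not the official text of the regulation.

```python
def prompt_only(question):
    """The model must answer from whatever it absorbed during training."""
    return f"{question}\nVerify your answer."

def grounded_prompt(question, passages):
    """Place retrieved source text in front of the model and ask it to cite."""
    sources = "\n".join(f"[{p['ref']}] {p['text']}" for p in passages)
    return (
        "Answer using only the sources below and cite the reference you used.\n"
        "If the sources do not cover the question, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}"
    )

# Paraphrased passage for illustration only.
passages = [{
    "ref": "Art. 12(3) GDPR",
    "text": "Respond within one month of receipt; extendable by two further "
            "months where necessary, considering complexity and number of requests.",
}]

question = "What is the deadline for a GDPR data access request?"
print(prompt_only(question))
print("---")
print(grounded_prompt(question, passages))
```

In the first case the instruction "verify" has nothing to verify against. In the second, the answer can be traced back to the passage that was supplied.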

How significant this is in practice becomes clear in this comparison.

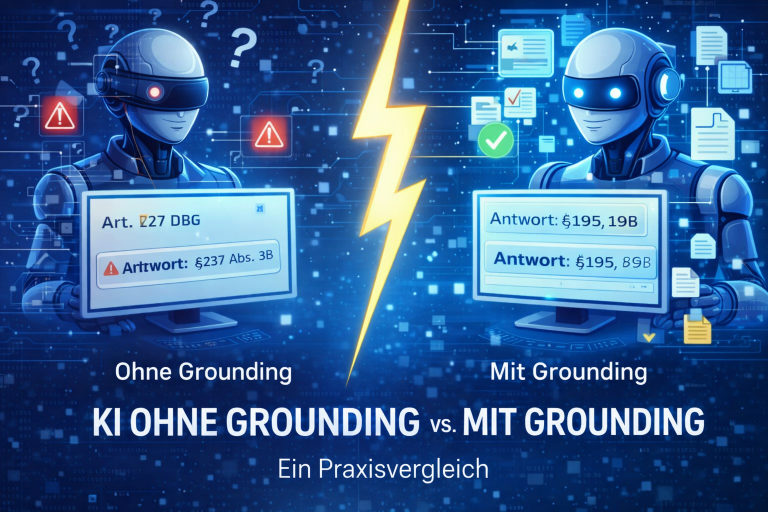

Prompting vs. Grounding

| Prompting | Grounding |
| --- | --- |
| controls the phrasing | controls the data foundation |
| influences style | influences reliability |
| can structure errors | can reduce errors |
| works with existing knowledge | extends the model’s knowledge |

Why this misconception is so widespread

Prompting is:

- easy to access

- immediately visible

- quick to test

Grounding is:

- more technically demanding

- less visible

- structural

As a result, optimization often happens in the wrong place.

The reason for these differences lies in the underlying technical architecture.

The critical misconception

If you only optimize the prompt, you’re working on the surface—not on the actual problem.

The real issue isn’t how the AI responds—

but what it bases its response on.

When prompts are useful

Prompts still play an important role:

- defining structure

- setting context

- formatting outputs

However:

They do not replace a reliable data foundation.

What is actually required

For reliable AI, you need:

- access to verified data

- semantic structuring of content

- technical integration with the model

The core point:

Reliability is created by architecture—not by phrasing.
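The three requirements can be sketched as a very small pipeline. Everything here is an assumption for illustration: the in-memory store, the stopword list, and the word-overlap ranking, which is only a stand-in for real semantic search. The point is the architectural step of refusing to answer when no verified source matches.

```python
import re

# Hypothetical in-memory store; a real system would query a maintained database.
VERIFIED_SOURCES = [
    {"ref": "Art. 12(3) GDPR",
     "text": "deadline to respond to a data subject request is one month, "
             "extendable by two further months"},
    {"ref": "Art. 15 GDPR",
     "text": "right of access by the data subject to personal data"},
]

STOPWORDS = {"a", "an", "the", "is", "to", "of", "by", "for", "what", "how", "and"}

def tokens(text):
    """Lowercase word set without common filler words."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question, sources, min_overlap=2):
    """Rank sources by shared words (stand-in for semantic search)."""
    q = tokens(question)
    scored = [(len(q & tokens(s["text"])), s) for s in sources]
    hits = [(score, s) for score, s in scored if score >= min_overlap]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [s for _, s in hits]

def answer_context(question, sources=VERIFIED_SOURCES):
    """Return the sources to hand to the model, or None if nothing matches."""
    return retrieve(question, sources) or None

covered = answer_context("What is the deadline to respond to a data access request?")
uncovered = answer_context("How long is the notice period for a rental contract?")
print([s["ref"] for s in covered])
print(uncovered)
```

When `answer_context` returns `None`, the application can tell the user that no verified source was found instead of letting the model improvise.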

How companies solve the problem

Companies essentially have two options:

- Optimize prompts → better phrasing, same errors

- Connect data (grounding) → better answers, lower risk

Conclusion:

Modern solutions don’t focus on prompts—

they focus on connecting reliable data.

Example: PLANIT // LAWBSTER

PLANIT // LAWBSTER is a purpose-built MCP server that connects AI systems like ChatGPT, Claude, or Microsoft Copilot directly to legal data.

Positioning:

PLANIT // LAWBSTER is not another AI—

it is the infrastructure that makes existing AI reliable.

What that means:

- access to verified legal sources

- structured and semantic search

- traceable, source-backed answers
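For readers unfamiliar with MCP (Model Context Protocol): a client invokes a server's tool with a JSON-RPC 2.0 message using the `tools/call` method. The sketch below only builds such a message. The tool name `search_legal_sources` and its arguments are hypothetical and do not describe the actual PLANIT // LAWBSTER interface.

```python
import json
from itertools import count

_ids = count(1)  # simple request id generator

def tool_call_request(tool_name, arguments):
    """Build a JSON-RPC 2.0 message of the kind MCP uses to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# Hypothetical tool name and arguments, for illustration only.
request = tool_call_request(
    "search_legal_sources",
    {"query": "deadline data access request", "jurisdiction": "EU"},
)
print(json.dumps(request, indent=2))
```

The design point is that the model asks a server for sources at answer time rather than relying on what it memorized during training.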

The core point:

Your AI won’t just phrase answers better—

it will deliver reliable ones for the first time.

What this means for companies

The key question is not: “How do I write better prompts?”

But: “How do I ensure that the AI has access to reliable data?”

In short

- Prompts improve phrasing

- They do not solve the core problem

- Without a data foundation, AI remains error-prone

- Grounding is the decisive factor

The core point:

Prompting is optimization — grounding is the solution.

Would you like to see how this works in practice?

Test PLANIT // LAWBSTER free for 14 days.

Frequently Asked Questions (FAQ)

Are good prompts enough for reliable AI?

No. Prompts control phrasing, not the data foundation.

Why does AI still make mistakes despite good prompts?

Because it doesn’t verify real sources—it calculates probabilities.

What matters more: prompting or grounding?

Grounding. It determines the factual reliability of the output.

Can both be combined?

Yes. Prompts provide structure; grounding provides reliability.