In short

- Grounding connects AI to verified data

- without grounding, AI remains error-prone

- with grounding, answers become reliable

Without grounding, AI cannot be used reliably in a legal context.

The reason lies in how language models work.

The central solution to this problem is: grounding.

Definition: What is grounding in AI?

Grounding is the prerequisite for AI to deliver reliable answers.

In concrete terms:

Grounding means that an AI does not rely solely on its training data, but actively incorporates verified external sources into its responses.

In short:

AI without grounding works with probabilities.

AI with grounding works with concrete, verifiable information.

Why is grounding necessary?

Language models like ChatGPT, Claude, or Microsoft Copilot work in fundamentally similar ways:

- they are trained on large datasets

- they calculate which word (more precisely, which token) is statistically most likely to come next

What they do not do on their own:

- check up-to-date information

- actively apply laws

- verify sources

The result:

- plausible but incorrect statements

- lack of traceability

- so-called hallucinations

The key point:

AI without grounding cannot produce reliable legal statements.

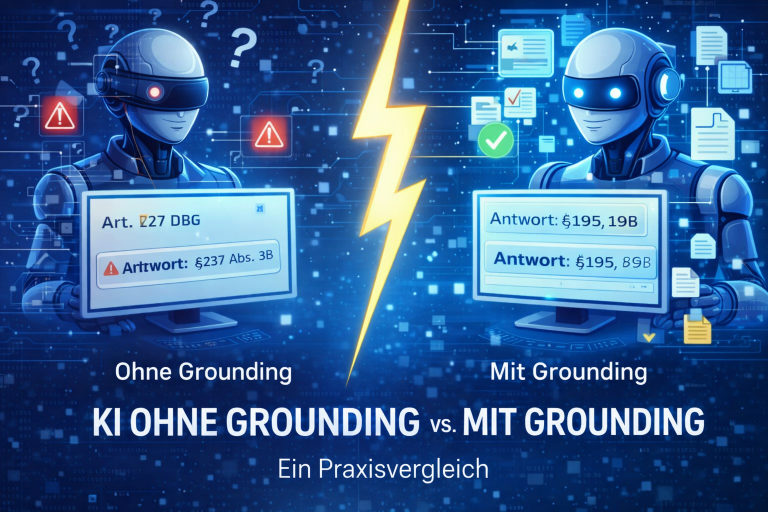

How significant the difference is in practice becomes clear in comparison.

What happens without grounding?

AI without grounding:

- generates text based on patterns

- may simplify or mix up information

- does not provide reliable references

Example:

An AI states a deadline or cites a legal provision but cannot ensure that it is correct or up to date.

What does grounding change in practice?

Grounding adds a critical capability: access to real, verified information.

This creates a fundamental difference:

| Without grounding | With grounding |
| --- | --- |
| probability-based answers | source-based answers |
| hallucinations possible | traceable statements |
| no evidence | verifiable sources |
| uncertainty | reliability |

Concrete example: AI without vs. with grounding

Without grounding:

“The deadline is 30 days.”

With grounding:

“According to Art. 12(3) GDPR, the deadline is one month.

It can be extended by two further months where necessary, taking into account the complexity and number of requests.”

The takeaway:

With grounding, statements are not only plausible—but verifiable and reliable.
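What "verifiable" means can be made concrete with a small check. The following is a minimal sketch, not a production validator: the function names, the citation pattern, and the `retrieved` set are assumptions made for illustration. It treats an answer as traceable only if it cites at least one provision and every cited provision is among the sources that were actually retrieved.

```python
import re

# Matches citations such as "Art. 12(3) GDPR" (illustrative pattern only).
CITATION = re.compile(r"Art\.\s*\d+(?:\(\d+\))?\s+GDPR")


def extract_citations(answer: str) -> list[str]:
    """Return all GDPR-style citations found in the answer, whitespace-normalized."""
    return [re.sub(r"\s+", " ", c) for c in CITATION.findall(answer)]


def is_traceable(answer: str, retrieved: set[str]) -> bool:
    """True if the answer cites a source and every citation was actually retrieved."""
    cited = extract_citations(answer)
    return bool(cited) and all(c in retrieved for c in cited)


# Hypothetical set of provisions returned by a retrieval step.
retrieved = {"Art. 12(3) GDPR"}

ungrounded = "The deadline is 30 days."
grounded = "According to Art. 12(3) GDPR, the deadline is one month."

print(is_traceable(ungrounded, retrieved))  # False
print(is_traceable(grounded, retrieved))    # True
```

A check like this only confirms that a citation points to a retrieved source. Whether the statement matches the content of that source still has to be verified separately.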

How does grounding work technically?

For grounding to work, three components are required:

- structured data foundation (e.g. laws, case law, regulations)

- semantic search (to correctly interpret and retrieve relevant content)

- integration with the AI (e.g. via MCP or APIs)
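The three components can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any particular product works: the `Provision` type, the two hand-written corpus entries (paraphrased, not official wording), and all function names are hypothetical, and plain word overlap stands in for real semantic search.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Provision:
    citation: str  # e.g. "Art. 12(3) GDPR"
    text: str      # paraphrased content, not the official wording


# 1) Structured data foundation (hypothetical, hand-written entries).
CORPUS = [
    Provision(
        "Art. 12(3) GDPR",
        "The controller must respond to a data subject request within one month; "
        "the deadline can be extended by two further months for complex requests.",
    ),
    Provision(
        "Art. 33(1) GDPR",
        "A personal data breach must be notified to the supervisory authority "
        "within 72 hours after the controller becomes aware of it.",
    ),
]


def tokens(s: str) -> set[str]:
    """Lowercased word set of a string."""
    return set(re.findall(r"[a-z0-9]+", s.lower()))


# 2) Retrieval. Word overlap is a stand-in for real semantic search.
def retrieve(question: str, corpus: list[Provision], k: int = 1) -> list[Provision]:
    q = tokens(question)
    ranked = sorted(corpus, key=lambda p: len(q & tokens(p.text)), reverse=True)
    return ranked[:k]


# 3) Integration: hand the retrieved sources to the model along with the question.
def build_grounded_prompt(question: str, sources: list[Provision]) -> str:
    cited = "\n".join(f"[{p.citation}] {p.text}" for p in sources)
    return (
        "Answer using only the sources below and cite them.\n"
        f"Sources:\n{cited}\n"
        f"Question: {question}"
    )


question = "What is the deadline to respond to a data subject request?"
sources = retrieve(question, CORPUS)
prompt = build_grounded_prompt(question, sources)
print(prompt)
```

In a real setup, the corpus would be a maintained legal database, retrieval would typically use embeddings or a dedicated search index, and the integration step would run through an interface such as MCP or an API rather than string concatenation.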

The core point:

Grounding is not a prompt issue—it’s an architectural one.

Grounding vs. prompting — what’s the difference?

Many try to solve AI issues by improving prompts.

That is not sufficient.

The answer:

- Prompting controls the phrasing

- Grounding controls the data foundation

Clear distinction:

Prompting does not replace data integration.

A better prompt can change how an answer is worded, but it cannot supply facts the model does not have.

Why is grounding especially important in a legal context?

In law, plausible statements are not sufficient.

What is required:

- correct legal provisions

- up-to-date legal status

- traceable reasoning

The core point:

Only with grounding can AI be used reliably in a legal context.

How companies implement grounding in practice

In reality, the challenge is not understanding the concept—but executing it.

What is required:

- structured legal sources

- semantic preparation of content

- a technical interface to the AI

How grounding is implemented technically is explained here.

Example: PLANIT // LAWBSTER

PLANIT // LAWBSTER is a purpose-built MCP server that connects AI systems like ChatGPT, Claude, or Microsoft Copilot directly to legal data and implements grounding at a technical level.

Positioning:

PLANIT // LAWBSTER is not another AI—it is the infrastructure that makes existing AI reliable. It represents a new category of solutions that operationalize grounding in a legal context.

What that means:

- access to verified legal content

- structured and semantic search

- traceable, source-backed answers

The core point:

Your AI is not replaced—it is made reliable and controllable for the first time.

What does grounding mean for companies?

Companies face a clear choice:

- AI without grounding → fast, but error-prone

- AI with grounding → fast and reliable

The core point:

The difference is not the tool—it’s the architecture.

In short

- Grounding connects AI to verified data sources

- without grounding, AI remains error-prone

- with grounding, answers become reliable and evidence-based

The answer is:

Grounding is a prerequisite for using AI effectively in a professional context.

Would you like to see grounding in practice?

Test PLANIT // LAWBSTER free for 14 days.

Frequently Asked Questions (FAQ)

What is grounding in AI (simple explanation)?

Grounding means that an AI accesses external, verified sources to produce reliable answers.

Why is grounding important?

Because without grounding, AI does not verify real data and therefore remains error-prone.

Is prompting enough?

No. Prompts control phrasing, not the data foundation.

How is grounding implemented?

By combining a structured data foundation, semantic search, and technical integration (e.g., MCP).

Can grounding be added later?

Yes. Existing AI systems can be connected to data sources via interfaces.
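One way to picture "adding grounding later" is as a wrapper around an existing model call. The sketch below is an assumption-laden illustration: `with_grounding`, `echo_model`, and `toy_retriever` are hypothetical names, and the model is replaced by a stand-in that simply returns the prompt it received, so that the effect of the wrapper is visible.

```python
from typing import Callable

Model = Callable[[str], str]            # takes a prompt, returns an answer
Retriever = Callable[[str], list[str]]  # takes a question, returns source snippets


def with_grounding(model: Model, retriever: Retriever) -> Model:
    """Wrap an existing model so every question is answered with retrieved sources attached."""
    def grounded(question: str) -> str:
        sources = retriever(question)
        context = "\n".join(f"- {s}" for s in sources)
        return model(f"Sources:\n{context}\n\nQuestion: {question}")
    return grounded


# Stand-in for an existing AI system: returns the prompt it was given.
def echo_model(prompt: str) -> str:
    return prompt


# Stand-in for a connected data source (hypothetical, fixed result).
def toy_retriever(question: str) -> list[str]:
    return ["Art. 12(3) GDPR: response within one month, extendable by two further months."]


grounded_model = with_grounding(echo_model, toy_retriever)
print(grounded_model("What is the deadline for answering a data subject request?"))
```

The model itself is unchanged; only the path between question and model gains a retrieval step, which is why the approach works with systems that are already in use.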